
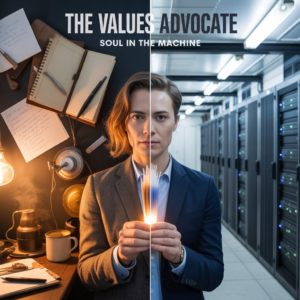

You aren’t afraid of the technology. You are afraid of losing the humanity in the work.

You believe that writing isn’t just about output; it’s about thinking. To you, handing the creative process over to an algorithm feels like a betrayal of your craft. You worry about copyright, about the environmental cost, or simply about flooding the world with “slop.”

You aren’t being difficult; you are being principled. If you refuse to use AI because it feels like “selling out,” you fit the profile we call The Values Advocate.

Summary: The Values Advocate resists on the basis of moral or professional identity. Their skepticism isn’t about whether the tool works; it’s about whether using it aligns with their standards. They often act as the “conscience” of the team.

Behavioral Tendencies:

- The “Soul” Argument: They dismiss AI outputs as “robotic,” “soulless,” or “generic,” refusing to edit them because “it’s harder to fix bad AI writing than to write from scratch.”

- Ethical Gatekeeping: They raise valid concerns about data privacy, copyright, or bias as reasons to block adoption entirely.

- Process Protection: They insist on manual workflows (e.g., brainstorming on a whiteboard) to protect the “integrity” of the creative process.

If this sounds like you, here are simple ways to get unstuck:

Your thought process: You value the struggle of creation. You believe that shortcuts erode skill.

- The “Camera” Analogy: The camera didn’t kill painting; it freed painters from pure documentation so they could pursue more expressive and abstract work. Treat AI as a lens. It captures the scene (the data or the draft), but you provide the focus and the framing.

- Automate the Drudgery, Protect the Art: Use AI for the tasks that kill your soul (scheduling, summarizing, formatting) so you have more energy for the tasks that feed your soul (strategy, creative writing).

- Be the Curator: In a world of infinite AI content, the human with the best “taste” wins. Your high standards make you the best person to use AI, because you won’t let the mediocre stuff slide.

As a Manager / Team Lead, here’s how you can help:

- Respect the Boundary: Do not force this person to use AI for their “core craft” (e.g., don’t ask your lead writer to generate blog posts with AI). Instead, invite them to use it for peripheral tasks such as research and outlines.

- The “Human-in-the-Loop” Guarantee: Explicitly agree that no AI-assisted work goes to a client without their “Human Stamp of Approval.” This protects their sense of professional duty.

- Values Alignment: If they worry about ethics, give them the job of “AI Ethics Lead.” Have them audit the tools to ensure data safety. Turn their resistance into a governance role.

How organizations can remove the “Values Barrier”:

- Transparent Labeling: Adopt a “Nutrition Label” policy for content (e.g., “AI Assisted / Human Verified”). This satisfies the Advocate’s need for honesty.

- Define “The Red Line”: Publish a clear list of what is sacred (human-only) vs. what is commodity (AI-allowed). For example: “We use AI for internal memos; we never use AI for client apologies.”

- Focus on Augmentation, Not Automation: Change the narrative from “doing more with less” to “doing better work.” The Values Advocate will only adopt AI if they believe it raises the quality ceiling, not just the floor.

Learn more about the 8 AI Adoption Profiles. Not sure which profile describes you? Take our quick 5-minute assessment.
